Weatherford: Predictive Intelligence

Role

Lead Designer · Enterprise B2B SaaS

Year

2026

01 — The Context: High Stakes at Scale

The platform is an enterprise system used by operators to manage thousands of high-value assets simultaneously. At its core is a predictive alarm system: machine learning models analyze asset behavior and fire alerts when they detect patterns associated with future equipment failure.

In this domain, these alarms provide a 60- to 90-day window to act before a failure becomes critical. This isn't just a buffer; it's the entire margin. A single undetected failure can cost millions in lost production and emergency repairs.

02 — The Problem: Three Layers of Failure

The challenge wasn't just a missing tool; it was a chain of compounding failures that were invisible until we mapped them.

Surface (Fragmented Investigation): Engineers investigated alarms by navigating across disconnected pages while maintaining personal Excel sheets to document findings. Every case started from zero.

Structural (A Model with No Feedback): The predictive model fired alarms but had no way to receive outcomes. If an alarm was wrong, the data simply disappeared. The model never learned it was wrong.

Strategic (Stagnating Intelligence): A predictive model that cannot learn from outcomes plateaus in precision. For an operator, a stagnating model is a measurable risk exposure.

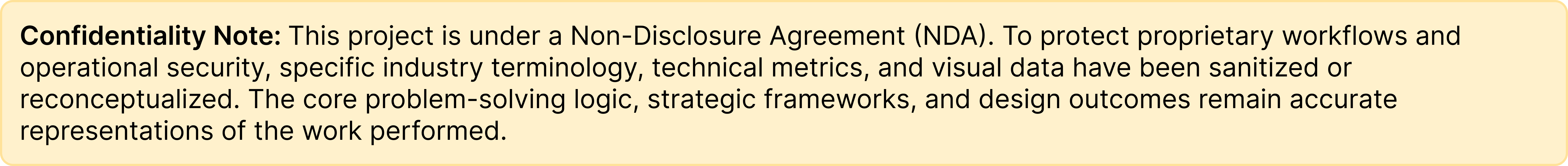

03 — Discovery: The Confusion Matrix Reframe

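Through working sessions with Subject Matter Experts (SMEs), I analyzed the manual Excel workarounds engineers were using. I introduced the Confusion Matrix as a design framework to map the gaps: it sorts every prediction into true positives, false positives, true negatives, and false negatives.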

This reframing shifted our goal from "building a better form" to "building a system that participates in the investigation." We realized that "False Negatives," where the model misses a failure entirely, had to be treated as a first-class problem, even if the work itself was scoped for future development.
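As an illustration of the framing, the four cells can be expressed as a small classification function. This is a minimal sketch, not the platform's code: the names `Cell` and `classify`, and the reduction of an outcome to two booleans (did an alarm fire, was a failure confirmed), are assumptions made for the example.

```python
from collections import Counter
from enum import Enum


class Cell(Enum):
    """The four cells of a confusion matrix."""
    TRUE_POSITIVE = "TP"   # alarm fired, failure confirmed
    FALSE_POSITIVE = "FP"  # alarm fired, no failure found
    FALSE_NEGATIVE = "FN"  # no alarm, but a failure occurred
    TRUE_NEGATIVE = "TN"   # no alarm, no failure


def classify(alarm_fired: bool, failure_confirmed: bool) -> Cell:
    """Map one investigated outcome to its confusion-matrix cell."""
    if alarm_fired and failure_confirmed:
        return Cell.TRUE_POSITIVE
    if alarm_fired:
        return Cell.FALSE_POSITIVE
    if failure_confirmed:
        return Cell.FALSE_NEGATIVE
    return Cell.TRUE_NEGATIVE


def tally(outcomes):
    """Count outcomes per cell; `outcomes` is an iterable of (alarm_fired, failure_confirmed)."""
    return Counter(classify(alarm, failure) for alarm, failure in outcomes)
```

Note that the false-negative branch is the only one reached without an alarm and with a failure, which is why that cell cannot be captured by a workflow that starts from an alarm.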

04 — Design Principles

The system carries the load: If the system knows the data, the engineer should never have to find it. Context is surfaced automatically.

Evidence over speed: A checkbox produces a decision, but an investigation produces evidence. We designed the workflow to slow engineers down just enough to be right, rather than making it easier to be wrong.

Design for the ceiling: Every architectural decision was made for future scale, ensuring we didn't build ourselves into a corner.

05 — Design Explorations: Defending the Architecture

These weren't just visual iterations; they were architectural arguments I defended to ensure long-term system health:

Workspace vs. Checkbox: Stakeholders suggested a simple checkbox for "Alarm Valid." I argued for a workspace because "Alarm Invalid" without supporting evidence is noise, not signal. To retrain the model, we needed the "why."

Navigation Rail Placement: Placing investigation inside the "Alarm Hub" was the easier move. However, since "False Negatives" have no alarm, putting the feature there would make capturing the most expensive failure mode structurally impossible. We moved it to the global navigation rail.
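Both arguments can be read as constraints on the underlying record. The sketch below is hypothetical (the `Investigation` class and its field names are invented for illustration and do not describe the shipped schema): evidence is mandatory, and the alarm reference is optional so that a failure with no alarm can still be recorded.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Investigation:
    """Hypothetical investigation record; all field names are assumptions."""
    asset_id: str
    failure_confirmed: bool
    evidence: Tuple[str, ...]       # the "why" behind the verdict
    alarm_id: Optional[str] = None  # None when no alarm ever fired

    def __post_init__(self):
        # A verdict without supporting evidence is rejected outright.
        if not any(note.strip() for note in self.evidence):
            raise ValueError("an investigation needs at least one piece of evidence")

    @property
    def is_false_negative(self) -> bool:
        """True when a failure was confirmed although no alarm was attached."""
        return self.alarm_id is None and self.failure_confirmed
```

If `alarm_id` were required, as it implicitly would be for a feature living inside the Alarm Hub, the false-negative case could not be represented at all.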

06 — The Solution: Closing the Loop

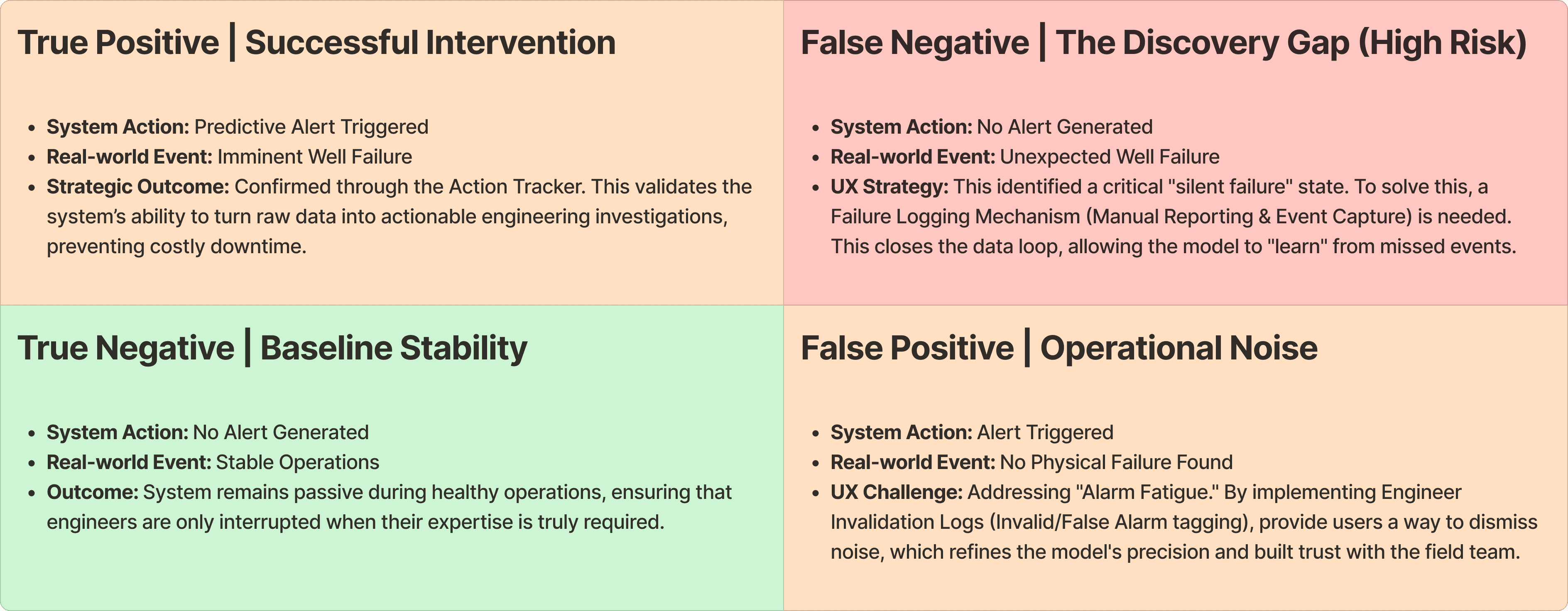

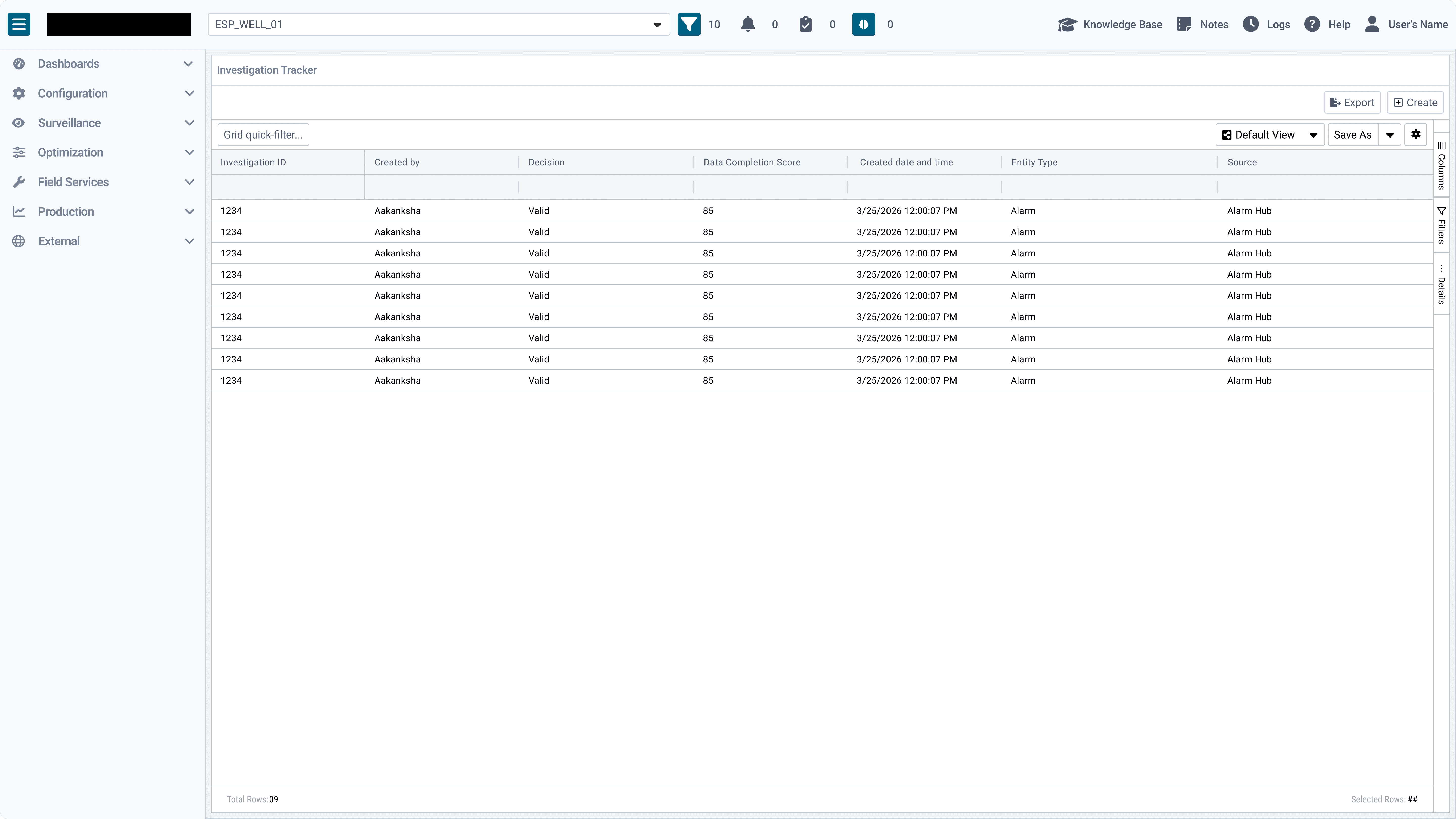

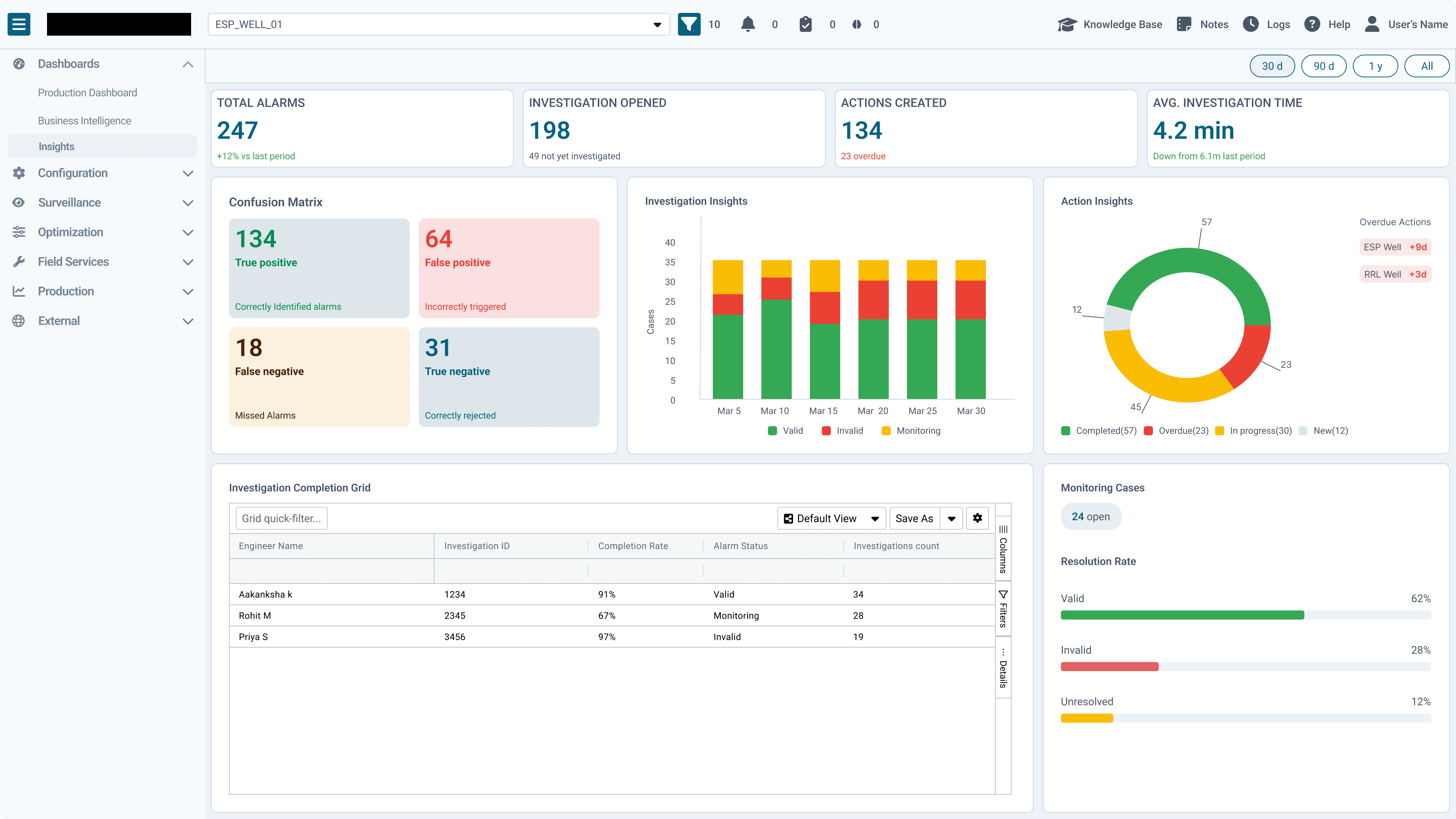

The final system consists of an Investigation Grid (the team's shared memory), an Adaptive Workspace, and an Analytics Dashboard.

Screen 01 (The Grid): Answers the question: "Has someone already investigated this?"

Screen 02 (The Workspace): A three-panel layout providing context-aware telemetry (directional deltas vs. daily averages) and "Similar Cases" that the engineer never has to search for.

Screen 03 (The Dashboard): The Confusion Matrix moves from a design framework to a live operational metric, tracking True/False Positive rates over time.
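For the dashboard metric, a minimal sketch of how per-month rates could be derived from investigated outcomes follows. It uses the standard definitions (true positive rate = TP / (TP + FN), false positive rate = FP / (FP + TN)); the function name, the `(month, cell)` input shape, and the assumption that true negatives are counted at all are illustrative and may differ from what the product actually tracks.

```python
from collections import defaultdict


def monthly_rates(records):
    """Compute true/false positive rates per month.

    `records` is an iterable of (month, cell) pairs, where `month` is a
    sortable label such as "2026-01" and `cell` is "TP", "FP", "FN" or "TN".
    A rate is None when its denominator is zero.
    """
    counts = defaultdict(lambda: {"TP": 0, "FP": 0, "FN": 0, "TN": 0})
    for month, cell in records:
        counts[month][cell] += 1

    rates = {}
    for month in sorted(counts):
        c = counts[month]
        actual_failures = c["TP"] + c["FN"]
        actual_healthy = c["FP"] + c["TN"]
        rates[month] = {
            "true_positive_rate": c["TP"] / actual_failures if actual_failures else None,
            "false_positive_rate": c["FP"] / actual_healthy if actual_healthy else None,
        }
    return rates
```

Returning `None` instead of zero for an empty denominator keeps a month with no confirmed failures from reading as a month where the model caught nothing.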

Scope of Work